Asking Big Questions About the Big Deal: AI

John Q. Todd

Sr. Business Consultant/Product Researcher TRM

February 2, 2026

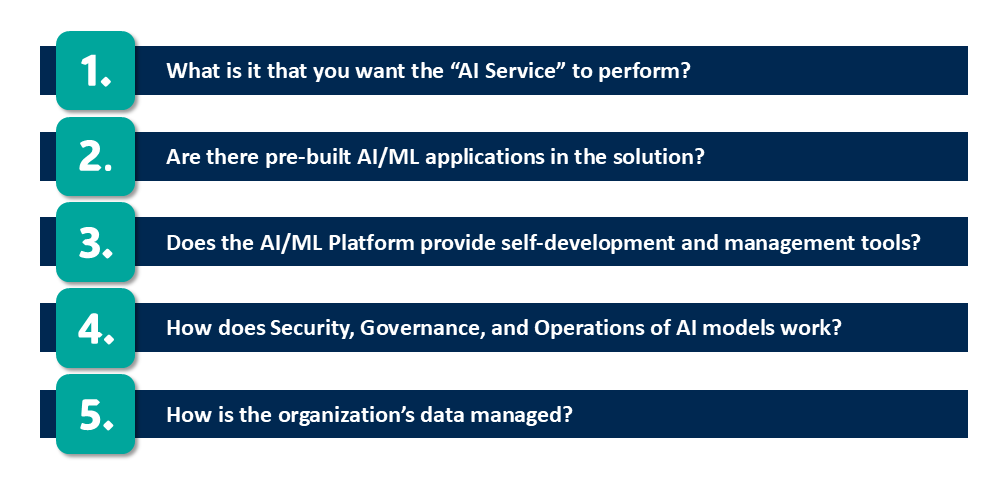

Five Questions to Ask Your AI/ML Vendor… and Yourself!

Artificial intelligence and machine learning are everywhere! Yet it can be very difficult to cut through the marketing hype to get down to the actual feature/functionality that embedded AI/ML tools deliver to users and the organization. How can you say, “Yes,” when it is not clear what the AI/ML tools do. Also, how would they truly benefit the organization?

Part of the difficulty is because of the new words that need to be learned and how they translate into the day-to-day activities of the workforce. Let’s do a little iterative training of your large mental generative model in the hopes of increasing the effectiveness of your prompts. 😉

In the context of an enterprise-level asset management (EAM) system such as IBM Maximo Application Suite, Artificial Intelligence (AI) refers to the integration of AI technologies such as Large Language Models (LLM) machine learning, natural language processing, and predictive analytics to transform the traditional EAM from a static record-keeping tool into an intelligent, proactive, and decision-making engine.

Let’s stop right here and define a few concepts:

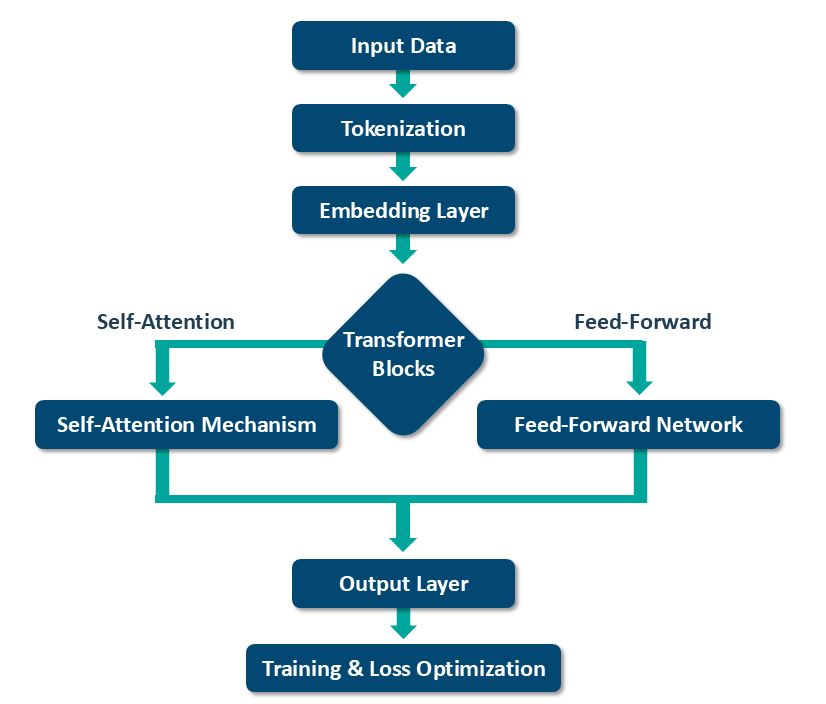

Large Language model

Behind the scenes of any chat or assistant application is one or more LLMs. These models may be developed for specific tasks, or more generically to respond to user’s “prompts.” Initially they were focused on text interactions, but now they can generate images, audio, and even 3D mesh. There are many currently available, and the development of LLMs is happening at an exponential rate. Think of them as ginormous repositories of information that is available to the application and the user. They may reside behind an “AI Service” that the EAM is accessing (such as IBM watsonx) or they could be deployed locally.

Prompts

These are the “questions” a user (or an application) asks of the AI Service/Model. Examples range from, “Draw an image of cats on the surface of the moon lounging around a pool,” to, “List the work orders in a complete status where the scheduled start and end dates were off from the actual start and end dates by more than 4 days.” The skill here for users is to refine their abilities to query the system to get the information they need. Yes, a user may also speak their prompt, and certainly an application may be designed to prompt for the user depending on what actions the user is taking. A good example of this is when an AI Service makes suggestions to the user as they are filling out a form.

Natural language processing

This is the ever-evolving ability of “AI Services” to respond to prompts from users and applications. Speech recognition, optical character recognition, and text to speech are a few of the capabilities of NLP. The notion here is that the interaction a human may have with the AI Service “feels” increasingly natural. This makes applications that leverage this ability more easily approached even by non-technical users.

Predictive analytics

Historical data (text, images, video, etc.) is used in statistical models and by data mining techniques to predict potential outcomes in the future with degrees of confidence. Patterns and correlations in the data that might be difficult for traditional methods to determine are exposed for decision-making. Predictions are only as good as the underlying historical data, but techniques exist to augment the data sets, making the confidence levels higher and the outcomes more useful.

We haven’t even started the article yet! Yes, this is a new area to learn about, so it is best to understand the terms as we begin, reducing the assumptions and misinformation. There are many more terms to learn, and you will certainly encounter them in the rest of this article. Have your favorite search engine or AI assistant available!

Let’s get to the questions…

1. What is it that you want the “AI Service” to perform? (And/or “Want it to do?”)

There is no question that AI solutions claim to offer new and more efficient ways to get work done. But what is it that your organization needs? What may seem cool in a demo might simply not be practical. Or, even better, because of a demonstration, an idea or two may come up that can be implemented with the development tools provided by the solution provider.

There is tension between “what the tool can do for us,” vs. “what we think we need the tool to do for us.” Some out of the box functionality may enable new ways of working, while other features may not yet be useful in your specific context. What might be cool and needle-moving in one industry might not make any sense in another.

Some typical out of box functions that an AI Service can provide:

- Making suggestions for field choices to a user while they are filling out a form or developing a record

- Record intelligence such as warning of duplicate records based upon past and current data

- Auto-generate foundational analyses (such as FMEA) based upon a library of failure codes and their remedies. (IBM Reliability Strategies is an example)

- Organizing, summarizing, collecting record sets based upon user prompts

- Anomaly detection and suggesting next-best actions

- Predicting, with degrees of confidence, future outcomes or system conditions based upon historical data, then suggesting actions

- Optimizing potential work schedules

- Searching for documents in a repository either based upon user intents or raw prompts

A fair amount of time needs to be taken to resolve the “can do,” vs. “want it to do,” tension before deciding. It will take several demos of live products to draw a picture of how to proceed and what proof of concepts might look like.

2. Are there pre-built AI/ML applications in the solution?

Most application sets (i.e., bundled products or solution suites) on the market today are not necessarily “new,” in that they were designed exclusively around the power of AI/ML. Rather, specific components or features have been integrated with “AI Services” to deliver specific features/functions to users.

The distinction to be aware of is: Are there specific, pre-built AI/ML applications in the solution, or are there AI features/functions that exist within individual modules? Both have equal value. An application does not need to be “fully AI,” if new features/functions have been added to help the user be more efficient.

Regardless of how these AI/ML features are integrated into the user interface, do they feel like a seamless part of the system or a separate, disconnected tool? It really does not matter if the users benefit from their use. In general, users are granted the use of the AI/ML functions and applications via a security setting. There may be some users who simply do not need to use these tools, so their experience with the application remains as before.

However, be prepared for some of these AI/ML features and applications to require specialized technical skills (e.g., data science knowledge) to use and interpret results. In some contexts, the application has been designed with the “business user,” in mind. Yet the nature of a prediction or analysis applications will require domain knowledge that might not be widespread in the organization. This is especially true in algorithms, formulas, or AI model development and management, discussed later in this article.

Examples of AI/ML specific applications are anything related to prediction and chat-bot/assistant windows. These have been designed from the ground up to deliver AI/ML functionality. In the case of some traditional applications, the AI/ML functionality has been delivered as additional actions or even automated suggestions made available to the typical EAM user.

It is important to differentiate between the different types of AI features and applications. For example:

Predictive Analytics:

These are the more sophisticated sets of applications. While they may come with pre-built models, they require access to underlying data to process at some frequency. Further, interpreting their results and adjusting the models or the data will require specialized skills. Predicting equipment failures, seasonal cash flow, supplier risk, labor shortages and the like all require well understood models and incoming data. No matter the efficacy of the data and the model, the results are presented with confidence levels to then base decisions upon. If results come with 30% confidence, both the model and the data might need refinement. ‘Care and feeding’ by qualified individuals are a requirement. IBM MAS Predict is an example of a comprehensive prediction system, using EAM data, pre-defined models, and external data to render results.

Generative AI:

Based upon user prompts and application logic, EAM systems typically present results as lists of records, summaries, totals, and other calculations. Given that an EAM system is largely a data collection and reporting engine, generative AI has become a portal for easier reporting and analysis for users. An additional benefit of generative AI is that EAM navigation across multiple applications in the suite is much easier. Instead of users manually locating the appropriate application, the AI assistant guides the user to the applications. IBM Maximo Assistant is an example of generative AI at work as an intelligent chatbot and assistant within an EAM system.

Further, voice activated/responsive systems can be developed using other services or “cartridges” from the solution vendor. Generative AI is a growing area of activity as more use cases of GenAI in EAM systems are explored by clients and vendors alike.

Over time, the intents of the user community are captured and added to the underlying assistant model. This then leads to the system making action suggestions to users. It is very possible for the assistant to ask to start the creation of a new record for the user based upon what the user is doing at the time.

Prescriptive Recommendations:

Using data provided by the organization, the underlying models make suggestions to users while they are filling out forms or creating new records in an EAM system. For example, if a representative set of work orders has been used to train a model about certain equipment failures, when a user is performing a failure report, the AI Service can suggest which options to choose. Of course, the user can choose whichever they wish if so enabled, but they do get a list of choices, each with confidence from the model. As more representative work orders are added to the trained model, the confidence levels of the suggestions will increase over time.

In this case, many EAM vendors have made the ‘care and feeding’ for these models to be more approachable by the typical user. Built-in tools are provided to gather representative records (such as work orders) to then use to further train the models. Therefore, no special skills are needed. Of course, given the provided tools as in a Predictive solution, prescriptive models can be built for any area of the EAM including purchasing, inventory, and any record processing that the organization needs.

3. Does the AI/ML Platform provide self-development and management tools?

Organizations logically move to customize or fine-tune pre-built models and begin using their own proprietary data. This is not always a simple process and raises important questions about an organization’s readiness and ability to perform its own AI/ML development. Even if a solution provides tools to build, train, and deploy machine learning models, those tools have limited value if the organization lacks the necessary skills—unless it plans to outsource the work.

It is good to know ahead of time what specific tools are included (e.g., Jupyter notebook updating, model registries, data analysis pipelines), or can be added over time (voice recognition, analysis tools, etc.). AI/ML development tools, such as IBM watsonx are becoming more approachable by a typical EAM administrator, but they might not be so appealing to a field maintenance or reliability engineer. Understanding the scope of the tools that are included, what can be added, as well as the available support/services, is very important when defining the roadmap.

Sources of Data

The sources of underlying data are also important. Pre-built models/LLM will typically have a fixed data set that is not updated directly. Of course, if the organization brings their own models or has them developed, they can be updated and used for other purposes. Most pre-built models remain in the state that they we released to ensure the model remains consistent with the privacy and governance the originator had in place.

Location of Models

The physical location of the models and the services that provide them to the user applications are varied. In many cases, the AI functionality reaches back to a hosted solution via a “service” link that may or may not be shared with other clients. In other cases, the service is installed locally on client servers for the exclusive use of that organization. Also, Hybrid solutions exist where the EAM solution is hosted in one manner, and the AI service/models are located elsewhere. In the end, the AI Service needs to be run somewhere, and the consuming EAM system and Users need access to it. This is also true from a development perspective where tools may be located locally, and the models being refined/republished are on remote systems.

4. How does Security, Governance, and Operations of AI models work?

These aspects of an EAM system that is using models and/or an AI service can cause difficulty in convincing stakeholders that the organization’s data is secure and is being used in a manner that benefits the business. Where the models come from, reside, and how they are used and refined makes a big difference as to the answers to this question.

Data Governance:

If the model has been provided by the vendor and is not being changed after deployment, then the training data has been curated, and no further governance is needed. The vendor has made the effort to ensure the quality and integrity of the data used for training and inference. However, if the organization is performing their own model training and development, then they are responsible for data governance. Aspects such as data cataloging, lineage tracking, and access control to ensure the data used for AI is secure and compliant is on the client. The EAM/AI vendor may provide tools to assist with this. (IBM watsonx.governance is one example)

Model Environment:

Understanding where the AI models are accessed and trained is critical to due to the numerous options. If the models are enclosed or pre-built in the EAM environment, either on-prem or in the cloud, then the level of security of that environment applies to the models as well. If, however, the EAM is reaching out to an AI service via a link or a secure tunnel, then the security of that distant computing environment (and the link!) provides coverage. Yes, organizational data can and will be “sent out” to the remote models in this case for processing… and then results returned to the requestor.

Model Maintenance:

In general, a provided model is fixed at a point in time and is not updated by the vendor. If, however, the client (or an entity on their behalf) is updating or retraining the models, then they are responsible for change management and deployment. A good example of this is a visual inspection system/model that is initially trained with images looking for certain objects. Over time, new inspections are performed, and those images are used to refine the training of the underlying models. After training, the model is redeployed, and that version is used operationally. The management of this process is up to whomever is performing these tasks.

Model Drift:

A strategy for handling model drift (when a model’s accuracy degrades over time as real-world data changes) should be defined by those responsible. This includes ongoing monitoring, clear performance thresholds, and processes for retraining or updating the model as needed.

Reliability of the AI service:

As with any critical business service, behind the scenes is a set of computing devices that must provide the desired “uptime.” Based upon established SLAs, whoever is responsible for the computing environment will be the party to ensure reliability. A challenge may arise when the EAM system is hosted by one entity while the AI Service is provided by another. In this scenario, the EAM may be operational, but the AI Service may be down, making those feature/functions in the EAM unavailable to users. This risk may also extend to AI development tools if they are delivered from a separate environment than the EAM.

5. How is the organization’s data managed?

This is a similar discussion to the one above on models. In the end, organizational data is being processed through the AI service(s) and models, wherever they are located. It is conceivable that the EAM (source of data) is in the cloud, while the AI Service is coming from another cloud provider, and the results end up distributed across the enterprise in a variety of forms. Every step of the way, organizational data security and privacy are critical. Some elements of this topic to consider:

Data Ingestion:

What is the organization’s process of collecting and ingesting data from various sources to feed the AI models? (e.g., core EAM modules, third-party systems, IoT devices) Who has the authority to introduce new data to the models?

Data Quality:

What tools and processes are in place to ensure data quality and integrity prior to being used for training models? Aspects such as data cleansing, transformation, de-duplication, and standardization are necessary to ensure the training process is efficient and does not return with multiple errors. Tools such as IBM watsonx.data have a wide range of tools and methods to prepare data from multiple sources for training models.

Wrap up

Whew! 5 simple questions that expose significant details that need to be considered and then addressed as an organization deploys “AI.” Artificial intelligence is unique in that unlike other technological endeavors; it is a bit like adding an employee to the organization. They need training, must be granted access to secure company data, it takes a while to trust them, and produce results that may need review prior to use or distribution. It is this “human” element that makes AI deployment across an organization require some different considerations than we have had in the past.

TRM assists clients across multiple industries in deploying technology solutions that improve operational performance and equipment reliability. Our team stays ahead of available technologies while applying hands-on experience to determine their appropriate use. The result is a practical, well-defined roadmap that is achievable and delivers measurable organizational benefits. We use AI in our own business, and are the highest level of partner with IBM, a world-class provider of all things AI.

Contact one of our Senior Business Consultants at askTRM@trmgroup.com

Follow John Q. Todd on LinkedIn for more insights on reliability, availability, and practical asset management.

Ready to elevate your asset management?

Connect with TRM to start your journey toward exceptional performance.

Related Resources

Explore insights, guides, and tools designed to help you unlock greater asset management performance and business value.

Unlock smarter

asset management

Ready to elevate your asset management?

Connect with TRM to start your journey toward

exceptional performance.